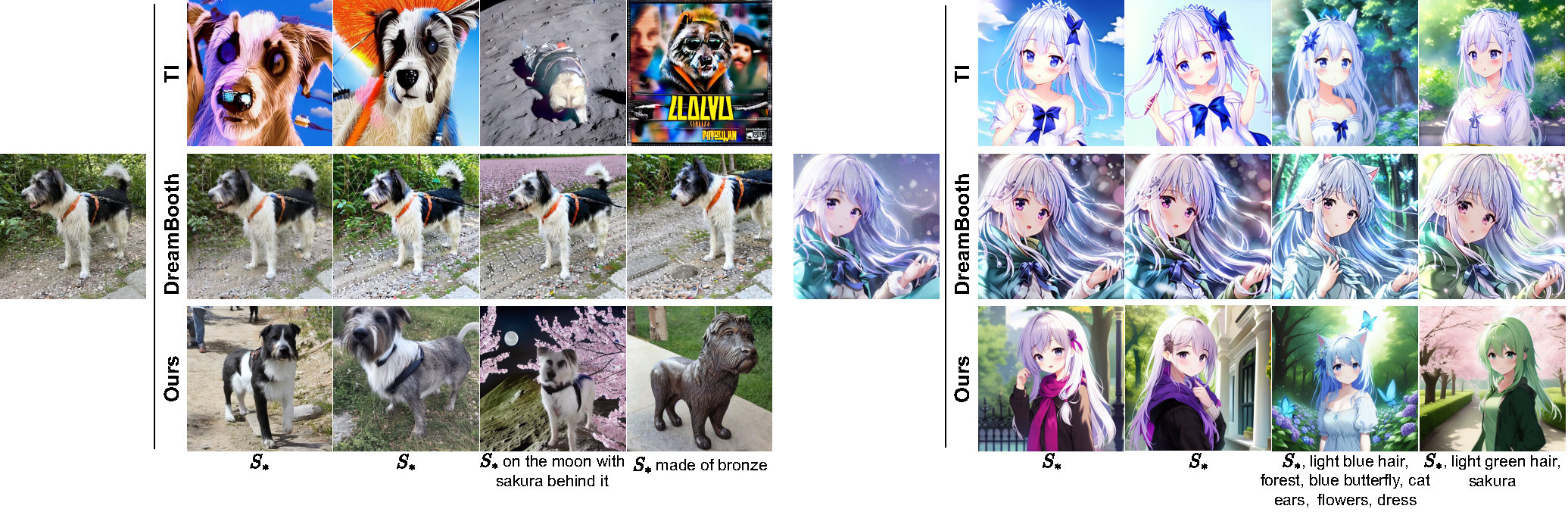

Large-scale text-to-image generation models with an exponential evolution can currently synthesize high-resolution, feature-rich, high-quality images based on text guidance. However, they are often overwhelmed by words of new concepts, styles, or object entities that always emerge. Although there are some recent attempts to use fine-tuning or prompttuning methods to teach the model a new concept as a new pseudo-word from a given reference image set, these methods are not only still difficult to synthesize diverse and highquality images without distortion and artifacts, but also suffer from low controllability.

To address these problems, we propose a DreamArtist method that employs a learning strategy of contrastive prompt-tuning, which introduces both positive and negative embeddings as pseudo-words and trains them jointly. The positive embedding aggressively learns characteristics in the reference image to drive the model diversified generation, while the negative embedding introspects in a self-supervised manner to rectify the mistakes and inadequacies from positive embedding in reverse. It learns not only what is correct but also what should be avoided. Extensive experiments on image quality and diversity analysis, controllability analysis, model learning analysis and task expansion have demonstrated that our model learns not only concept but also form, content and context. Pseudo-words of DreamArtist have similar properties as true words to generate high-quality images.

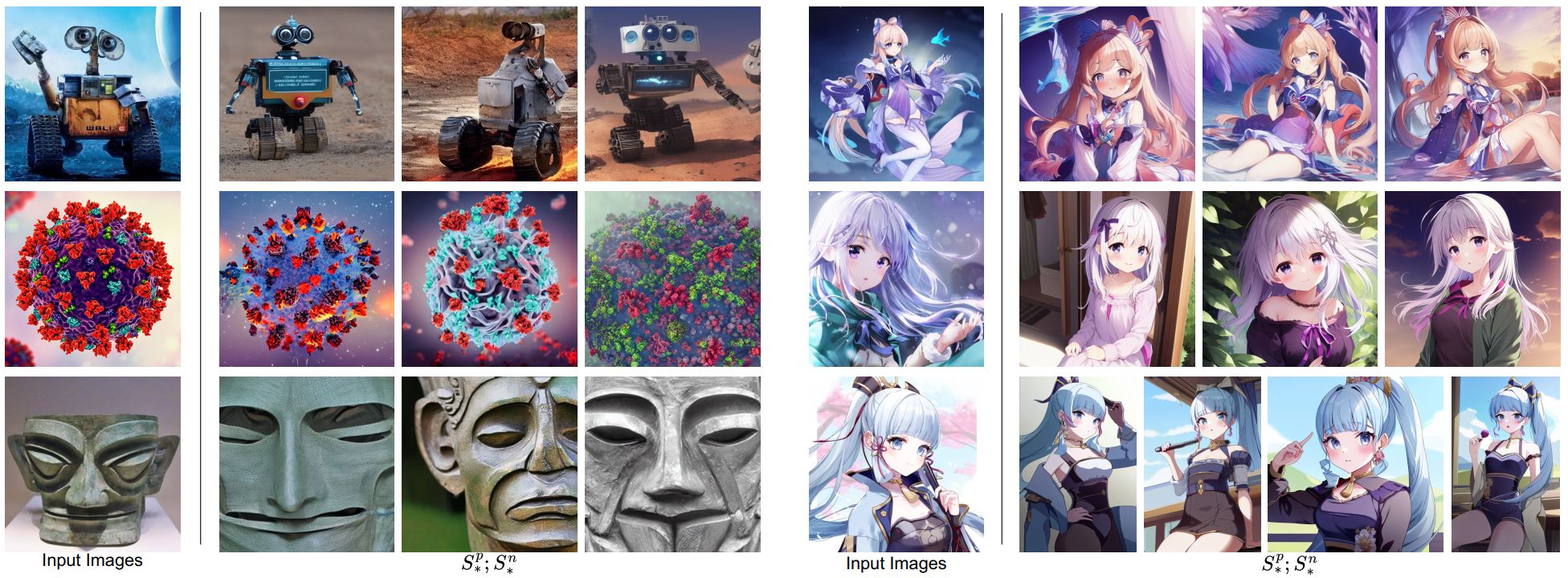

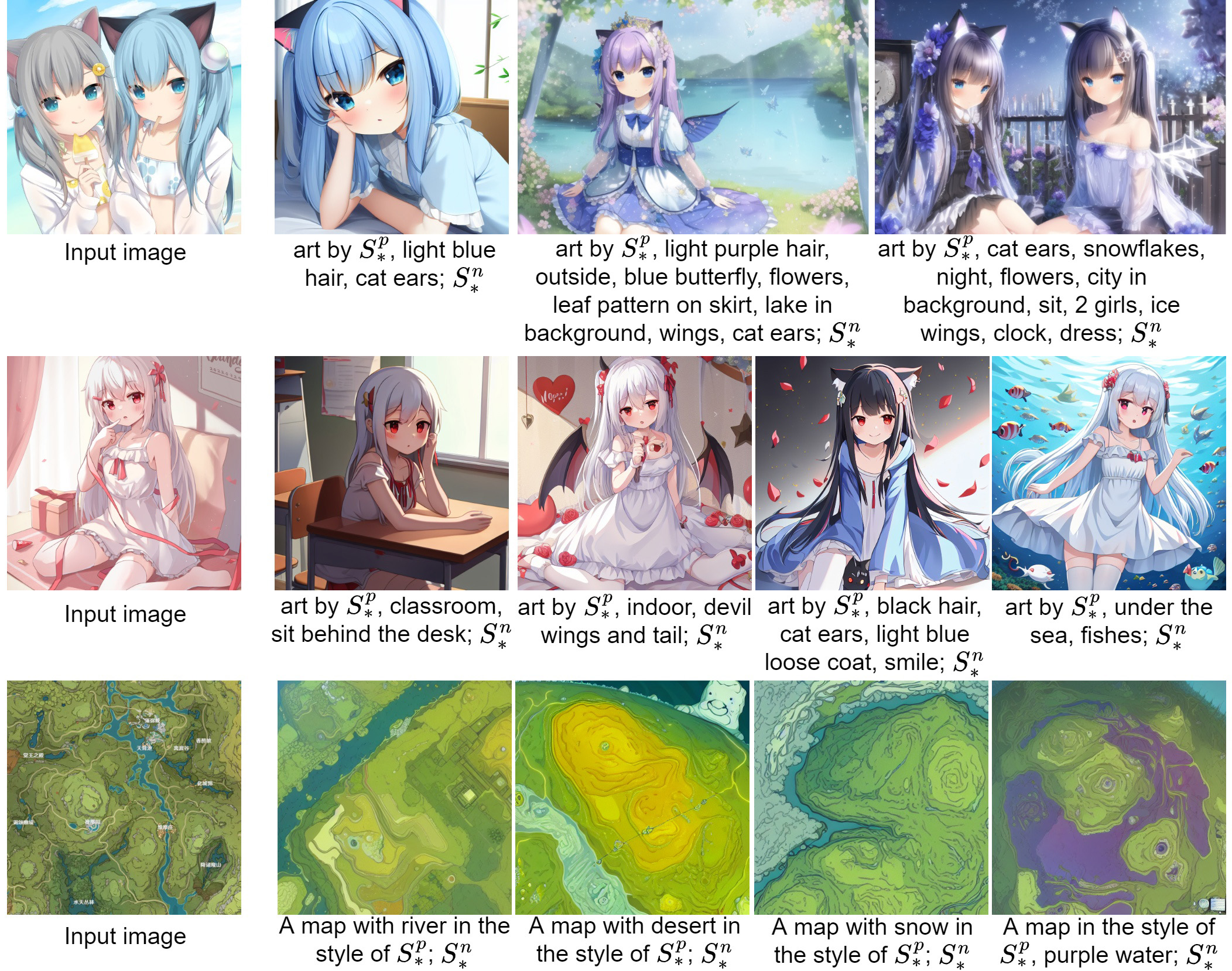

DreamArtist can teach text-to-image diffusion model to learn a new concept with only one image as a paired pseudo-words. These pseudo-words from DreamArtist can generate high quality, diverse and highly controllable images like the model's other original words. It can effectively produces high-quality and diverse images with one given reference image and embraces various new renditions in different contexts.

DreamArtist employs a learning strategy of contrastive prompt-tuning (CPT). Rather than describing the entire image with just positive embeddings in conventional prompt-tuning, our CPT jointly trains a paired positive and negative embedding ($S^p_*$ and $S^n_*$) with pre-trained fixed text encoder $\mathcal{B}$ and denoising u-net $\epsilon_\theta$. $S^p_*$ aggressively to learn the characteristics of the reference image to drive the model diversified generation, while $S^n_*$ introspects in a self-supervised manner and rectifies the inadequacies of positive prompt in reverse. Due to the introduction of $S^n_*$, $S^p_*$ does not need to be forcibly aligned with the input image. Thus $S^p_*$ does not need to attract as much attention as conventional prompt-tuning (like Textual Inversion) to align the generated and training images, which brings diversity and high controllability. Without excessive attention to $S^p_*$, the characteristics included in the additional descriptions will not be obscured and will be clearly rendered in the synthesized images. Moreover, due to the rectification from $S^n_*$, these additional characteristics will be rendered as harmonious as those from $S^p_*$.

Creations created with DreamArtist without additional text descriptions. Our DreamArtist can not only generates highly realistic images with remarkable light, shadow and detail, but also keeps the generated images highly diverse.

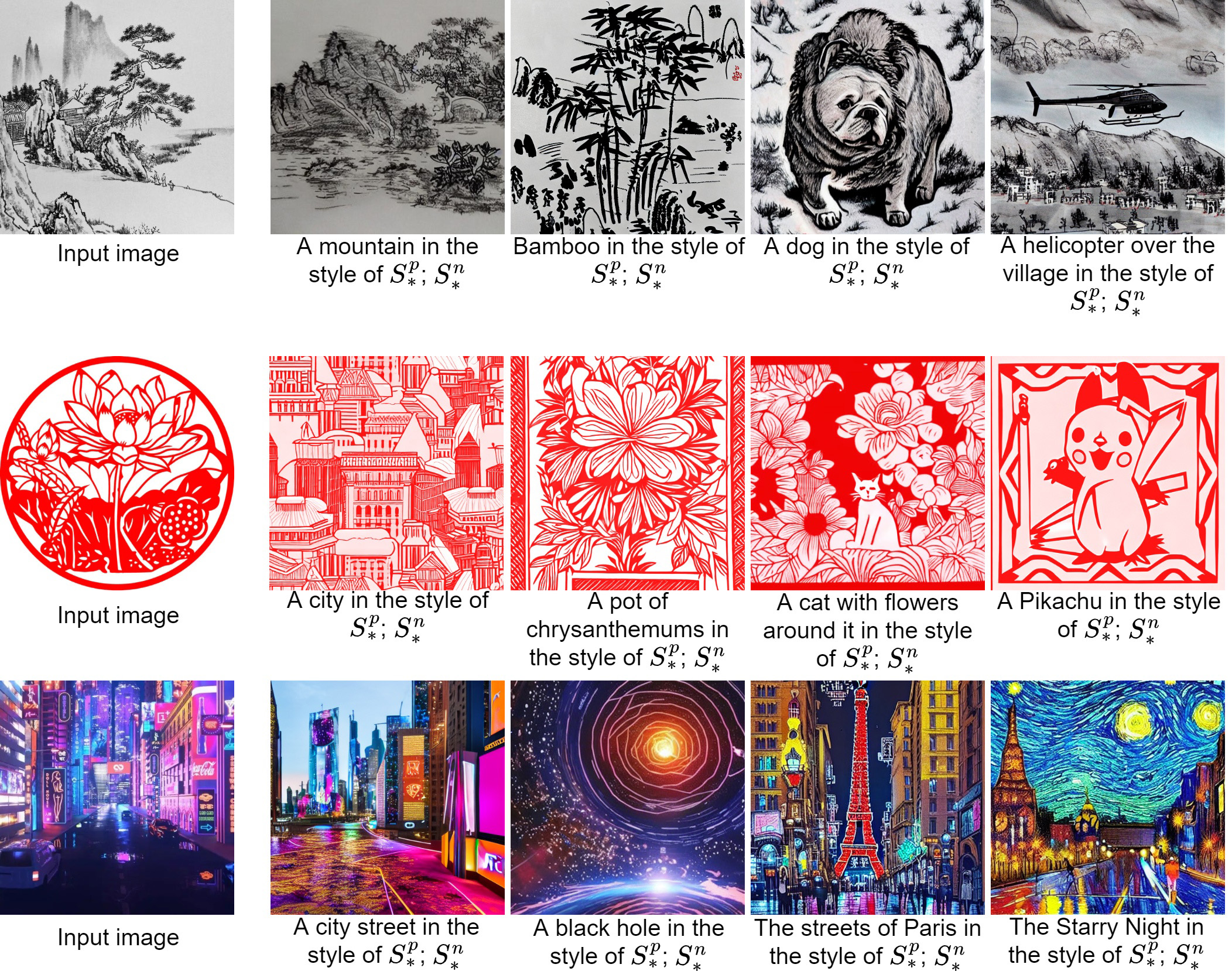

Compared to the abstract style like Vincent Van Gogh's "The Starry Night". More often than not, users need some more practical styles, for example, the style of a movie or game scene, the style of Cyberpunk or Steampunk, the style of a Chinese Brush Painting or paper-cut, or the painting style of your favorite artist. DreamArtist can learn some practical and highly refined styles pretty well. The generated images are identical to the training images in terms of colors and texture features, cloning the style of the training images remarkably.

DreamArtist can easily combine multiple learned pseudo-words, not only limited to combining objects and styles, but also using both objects or style, and generating some reasonable images. Combining multiple pseudo-words trained with DreamArtist show excellent results in both natural and anime scenes. Each component of the pseudo-words can be rendered in the generated image, even combining two radically different objects or styles. These are difficult to realize for existing methods.

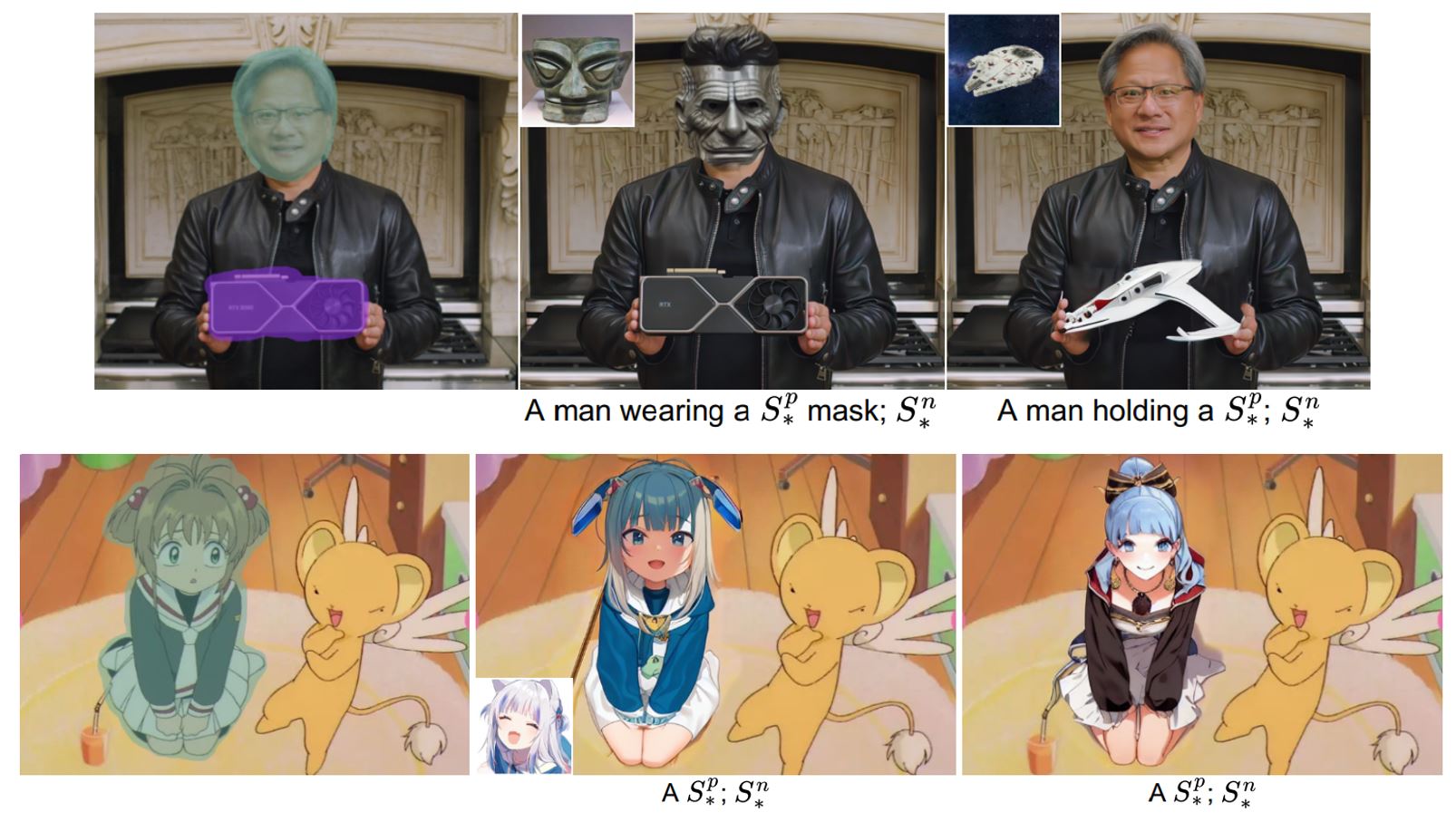

The pseudo-words learned with our method works well for text-guided image editing that follows the paradigm of Stable Diffusion. The modified areas not only show the learned form and content, but also integrate well into the environment, which looks harmonious. The performance of image editing with the learned features of our method is as effective as that of the original features from Stable Diffusion. These are difficult to realize for existing methods.